Language Lounge

A Monthly Column for Word Lovers

The Meaning of Meaning

Warning: reading this column may result in a case of semantic satiation.

First, an anecdote. A couple of years ago I worked on a computer program to do things with verbs. It used a technique called machine learning, in which the program is "trained" to do something by being shown examples of right and wrong ways of doing it. The program was a verb sense classifier. In Natural Language Processing (NLP), that is a software program that recognizes which sense is intended when a computer processing text comes across a polysemous verb (that is, one with multiple meanings). In other words, if a computer is reading the sentence "The March gilt future settled 5 ticks higher at 114.09, braking two days of hefty falls but trailing the bund future by 15 ticks," it needs to know that settle in this sentence means something like "come to rest" and not "establish in residence". Of course, it helps to know that the ticks in this sentence are equidistant marks on a reference scale, not parasitic arachnids with specialized mouthparts for sucking. You know that already, just by reading the sentence. That's because you're a human. I'll return to that point in a bit.

In order to train a classifier, you first supply it with sentences that have been annotated by humans with the verbs in them mapped to particular senses from a dictionary. The idea is that if you feed the computer enough correct examples of something, it will learn from those examples and be able to recognize and distinguish a pattern on its own. My part in this enterprise was collecting sample sentences from corpora, assigning them to human annotators to mark, and then judging, in the case of disagreements among annotators, which sense a particular verb instance actually represented.

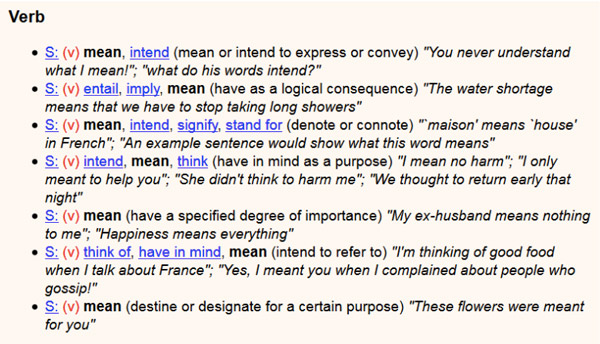

An interesting case arose with the verb mean. The sense inventory (that is, the list of definitions) that the annotators worked with is the same one you see in the Visual Thesaurus: seven senses representing various shades of meaning of mean.

What we found was that human annotators were unable to agree consistently which sense of mean was actually represented in various sentences. Or to put that in more technical terms, the interannotator agreement (IAA) in our training data was too low to be of use in helping the verb classifier to disambiguate mean. If humans can't make a distinction consistently, you can't expect, in most cases, that computers will be able to. They only do our bidding.

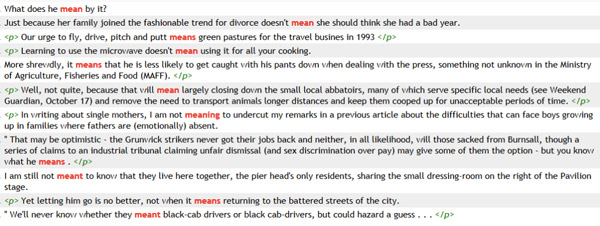

We found that we had to simplify the sense inventory in order to get usable results. Human annotators were very clear when mean meant "signify," e.g., "Maison means 'house' in French." They were also very clear when mean meant "intend," e.g., "I meant to get up earlier this morning." But for all other senses there was great agonizing and little agreement because, as is the case with many verbs, the borders between what dictionaries deem to be separate senses are fuzzy, and one sense bleeds into another in such a way that a given sentence may seem to exemplify either of two "separate" senses of a verb perfectly well, depending on how you interpret the sentence or the definition. To get a grip on the task, ask yourself: to what sense would you assign mean in any of this handful of sentences?

What we ask computers to do when they process text is in some ways harder than what you have to do. When you read or hear a sentence, your job is to understand it. Having done that, you don't have to consciously match the verb in the sentence to a definition in a mental sense inventory; that would be cumbersome and time-wasting. But since computers cannot really understand (because, as of now, they are not conscious and they are not a faculty comparable to a mind), they can only simulate some of the things we do with language: answer questions, translate, summarize, or make a guess about whether two sentences with different words contain roughly the same information, for example.

Our compromise in training the computer program to interpret mean was to instruct it to recognize only two senses, the "signify" sense and the "intend" sense. We sacrificed some precision for accuracy, and the verb classifier was then able to distinguish broadly between mean "signify" and mean "intend", throwing any sentence that contained mean into one category or the other.

If this seems rather crude and oversimplified to you, that's again because you are a human, and you are endowed with the great gift that we have so far not been successful in bequeathing to computers: you understand the meaning of meaning. How do you come to know the meaning of mean, and to be comfortable with the notion of meaning? How is it that we are all completely on board with the very peculiar phenomenon in which sounds we make by flapping bits of meat in our mouths and the graphical representations of these on a page contribute anything at all to meaning and the exchange of information? Long answers to these questions fill books. The short answer is that you are uniquely wired to deal with the amazing complexity of language as if it were child's play. No conceivable amount of wiring is going to give computers the ability to do with language what humans do with language.

We have sense organs, in training over a lifetime, that begin to work together early in childhood, enabling us to associate particular confluences of stimuli with concepts that have labels, or names. Though none of us may remember it, we might well have learned that cotton referred to a soft, white, fluffy substance when a parent daubed a bit of it on a cut or scrape. And we might have learned that rotten referred to things that were once good or usable but now are not, because they give off a bad smell. And we know, as well, that it's arbitrary that cotton and rotten rhyme in English; they probably don't in other languages. If you learn these words in another language, you will know not only the words; you already know all of the words' affordances, that is, all of the qualities associated with the word's meaning.

A computer, on the other hand, never felt the soft touch of cotton on its cheek, nor was it ever repulsed by a smell coming from a plastic container at the back of the fridge; computers know nothing of affordances except what they might develop through relentless, repetitive exposure. A computer might even "think" that cotton and rotten had something to do with each other, since their symbolic representation is only minutely different. Through training and successively refined inputs of data, a computer may eventually "know" that cotton belongs with words like linen, silk, and rayon; and that rotten is sometimes similar to "decayed" and sometimes to "lousy". But a computer is never going to know what cotton is, or that rotten things are repugnant.

The absorption of meaning in our lives is inextricably tied to sensory experience, and in many cases, to our emotional responses to sensory experience. Our determination of when and how a word, event, or sentence means something is an accretion of these experiences over our lifetimes, and since all of our experiences are unique to some degree, we draw different kinds and amounts of meaning from the things around us. I think this is why humans struggle to match particular instances of mean in sentences to definitions of mean in dictionaries: our interpretation of meaning is contextual and personal, and our determination of what mean means is somehow clear (we understand it) while also being fuzzy (we can't always match it to a list of things that mean means). A computer's interpretation of meaning, at best, is the result of pattern-matching, trial-and-error, and human intervention. Computers only have experience of the representations of things, not of the things themselves; theirs is a shadow world of symbols in which they can only approximate that meaning has any meaning at all.